Azure DevOps Pipelines - Could not get lock /var/lib/dpkg/lock-frontend

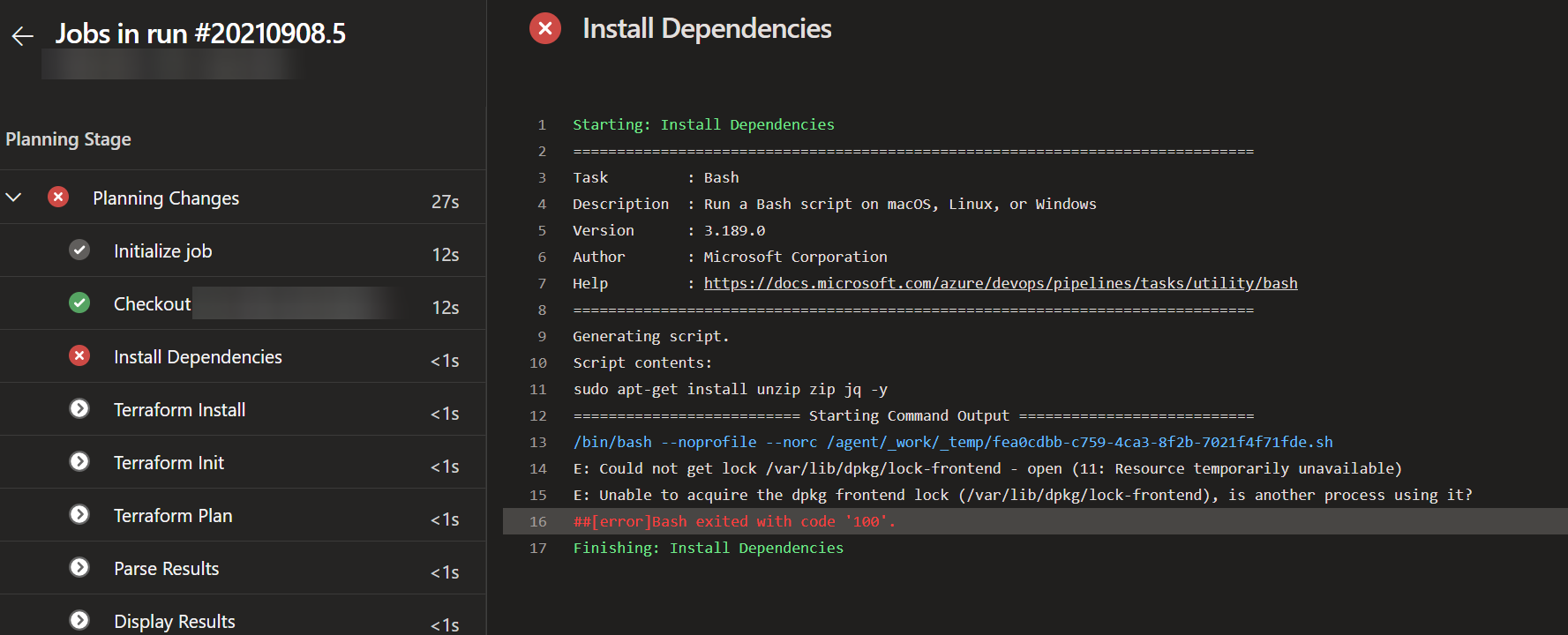

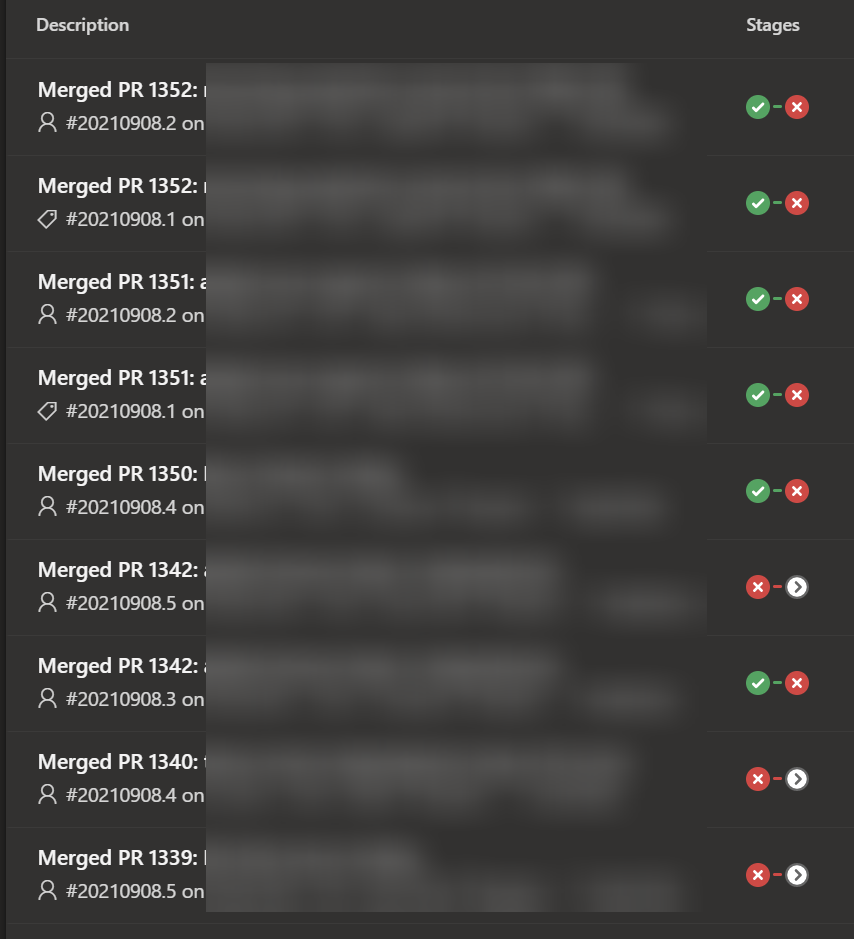

I’ve been using Azure DevOps Pipelines to build our Azure infrastructure with Terraform for nearly 9 months now, and it’s been pretty much rock solid. However, this week my pipelines suddenly started failing whenever I ran more than one build at a time, and they all gave this error:

E: Could not get lock /var/lib/dpkg/lock-frontend - open (11: Resource temporarily unavailable)

E: Unable to acquire the dpkg frontend lock (/var/lib/dpkg/lock-frontend), is another process using it?

This was happening at the part of the pipeline where I install some dependencies I need on the Ubuntu agent, mainly jq, unzip and zip. It only happened when I ran multiple pipelines at once, which made my self-hosted VM Scale Set agents scale out. A single deployment was fine.

Seeing your pipelines do this when you are under the pump to deliver a lot of changes is frustrating.

Solution

It turned out to be fairly simple. When a VM Scale Set instance was starting up, something (I think the Azure DevOps extension) was running apt-get to fetch updates. That process held the dpkg lock, so my dependency step couldn’t run apt-get and crashed out.

To fix it, I added a wait loop to the dependencies section of my pipeline:

    # Install dependencies in the agent pool server
    - task: Bash@3
      inputs:
        targetType: 'inline'
        script: |
          until sudo apt-get -y update && sudo apt-get install unzip zip jq -y
          do
            echo "Try again"
            sleep 2
          done
      displayName: 'Install Dependencies'

All this does is try to install the packages with apt-get and, if that fails because of the lock, wait 2 seconds and try again until it succeeds. (Strictly speaking, the loop retries on any apt-get failure, not just a lock.) Simple, but very effective.

So now, instead of my dependency step crashing out in under a second when there is a lock, it typically waits on the lock for 10 seconds or so and then completes fine. I have had 100% pipeline success since.
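One thing to be aware of is that the loop above retries forever, so a failure that has nothing to do with the lock (a mistyped package name, say) would keep the job spinning until the pipeline times out. If that worries you, here is a minimal sketch of a bounded variant. The `retry` helper, its argument order and the attempt counts are my own illustration rather than part of the original fix, so adjust them to suit.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the original fix): run a command until it
# succeeds, giving up after max_attempts tries and sleeping delay seconds
# between tries.
retry() {
  local max_attempts="$1" delay="$2"
  shift 2
  local attempt=1
  until "$@"; do
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "Giving up after ${attempt} attempts: $*" >&2
      return 1
    fi
    echo "Attempt ${attempt} failed, trying again in ${delay}s"
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# Example usage in the pipeline's inline script (illustrative values):
#   retry 30 2 sudo apt-get -y update
#   retry 30 2 sudo apt-get install -y unzip zip jq
```

With 30 attempts at 2 seconds each, the step waits roughly a minute for the lock before failing, which leaves plenty of room for the 10 seconds or so I normally see. Newer versions of apt also have a `DPkg::Lock::Timeout` option that is meant to make apt wait for the lock itself, but I haven't used it here, so treat that as something to check against your agent's apt version.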

Hope that helps!

Andy